The World Cup potato chip bags look like a xenomorph with an underbite. It creeps me out.

I took my wife and kid to an outdoor swap meet for a few hours around lunchtime today, and now I’m reminded that the sun hates me and wishes to smite me at every chance.

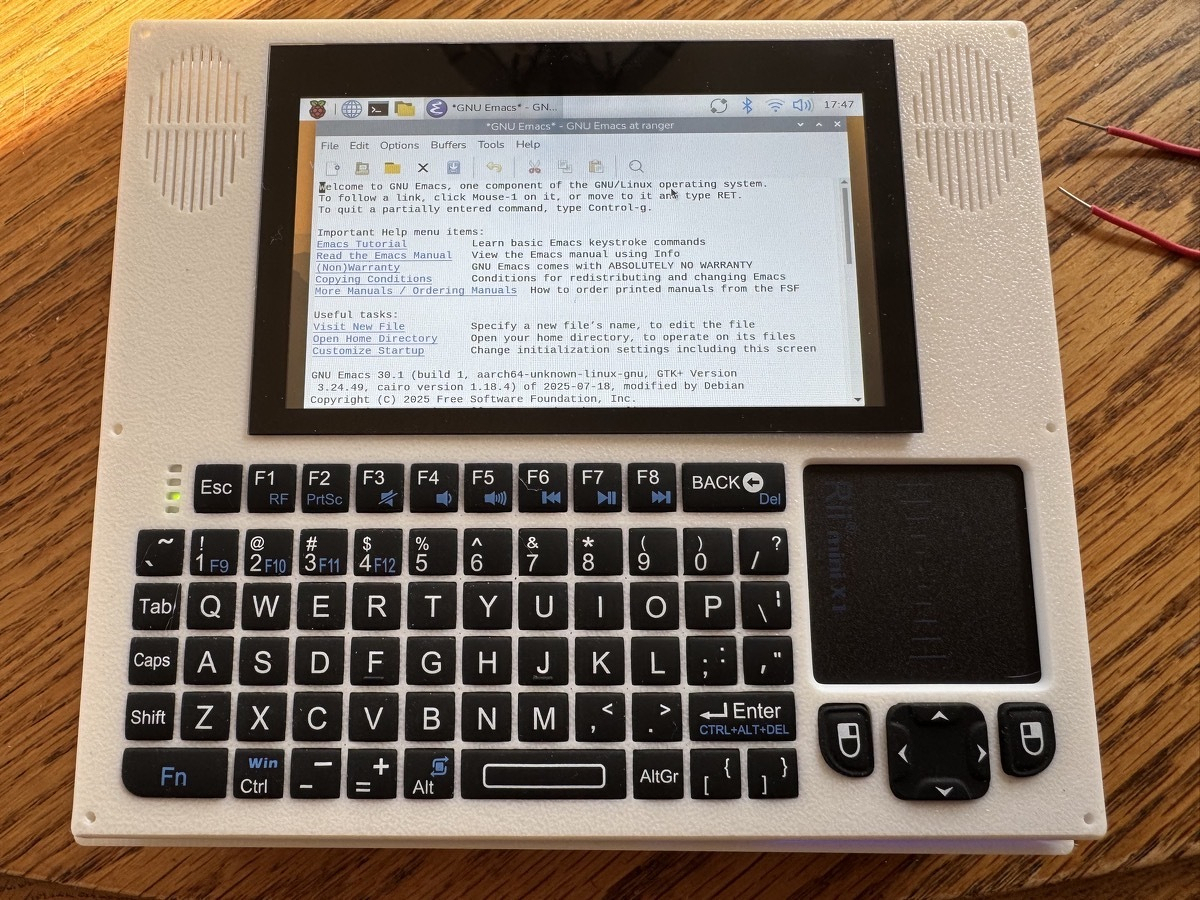

“You wouldn’t download a laptop, would you?”

“I’m saddened that you so completely misunderstand me.”

I have, in fact, downloaded a laptop, printed it, and installed a Free operating system.

In Argentina, U.S. Tech Billionaire Peter Thiel Finds An Escape - The New York Times:

The billionaire’s new roots in Argentina are said to be partly motivated by concerns about the future of the United States and shared beliefs with Argentina’s right-wing leader.

Don’t let the proles hit you on the ass on the way out, just kidding, let them for all I care.

I’m going to use jj instead of git for the next 2 weeks. Hold me to this.

Today’s agenda:

5:15AM: Up for a video call with Eastern Europe. (Context: I’m in California.)

7AM: Commuting to work.

4PM: Leaving work.

5PM: Meeting up with the backpack cult for a 5-mile weight-carrying hike around Golden Gate Park and the beaches and bridge area.

8PM: Commuting home.

9PM: Animal Crossing until I fall asleep harvesting coconuts.

After Town Bans Flock, Councilmember Crashes Out, Proposes Internet and Phone Ban

“How many more meetings is it going to take before we understand the community didn’t vote for this? They don’t want it. How many more times are the cameras going to have to get cut down before somebody realizes it’s not worth the money?”

I bought Hoka Clifton shoes a while back because they have a reputation for being good for walking. I disagree. They’re fantastic for standing. They’re delightful for strolling. They’re sucky for covering distance, though.

The soles are just too soft. There’s no support. Scurrying a mile to make a bus connection? Plan on rolling your ankle along the way and having achy shins. I would truly rather wear hard-soled boots for a long or fast trip.